Concurrency Prerequisites

1. Program

A program is passive code on disk.

- Example: app.py

- Stored as:

  - Instructions

  - Static data

- Has no execution, no state, no resources

Until loaded, it cannot do anything.

2. Process

A process is a running instance of a program.

When you run:

python app.py

The OS creates a process:

- Private virtual address space

- File descriptors

- Heap, stack

- PID

- Signal handlers
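One way to see that each run gets its own process is to compare PIDs. The sketch below assumes a Python interpreter is reachable through `sys.executable`; it starts a second interpreter and checks that the OS gave it a different PID from the current one.

```python
import os
import subprocess
import sys

# PID of the process running this script.
parent_pid = os.getpid()

# Start a second Python process and have it report its own PID on stdout.
child = subprocess.run(
    [sys.executable, "-c", "import os; print(os.getpid())"],
    capture_output=True,
    text=True,
    check=True,
)
child_pid = int(child.stdout.strip())

print(f"parent PID: {parent_pid}, child PID: {child_pid}")
```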

Key property

Processes are isolated from each other.

They can communicate only through explicit, OS-mediated channels, such as:

- Files

- Pipes

- Sockets

- Shared memory (explicit)
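As a rough illustration of pipe-based communication, the sketch below sends text to a child process over its stdin pipe and reads the reply from its stdout pipe. The helper name `shout_via_pipes` and the child's one-line program are made up for this example.

```python
import subprocess
import sys

# Code for the child process: read all of stdin, write it back upper-cased.
CHILD_CODE = "import sys; sys.stdout.write(sys.stdin.read().upper())"


def shout_via_pipes(message: str) -> str:
    """Send `message` to a child process over a pipe and return its reply."""
    result = subprocess.run(
        [sys.executable, "-c", CHILD_CODE],
        input=message,          # written to the child's stdin pipe
        capture_output=True,    # child's stdout/stderr come back through pipes
        text=True,
        check=True,
    )
    return result.stdout


print(shout_via_pipes("hello from the parent"))
```

The parent and child never touch each other's variables; the only data that crosses the boundary is what is written into the pipes.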

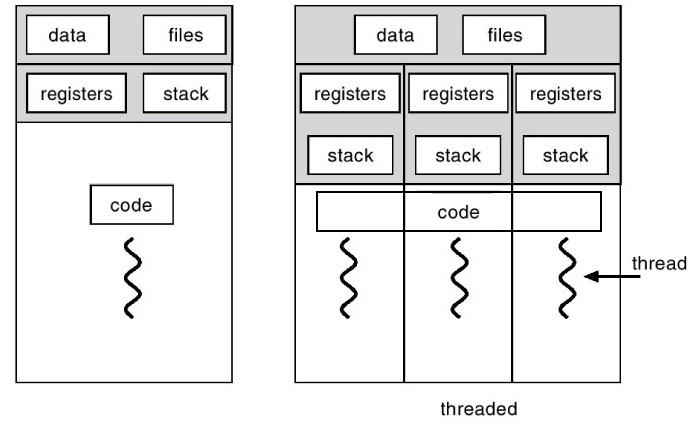

3. Thread

A thread is a path of execution inside a process.

A process may have:

- One thread (single-threaded)

- Many threads (multi-threaded)

Threads in a process:

- Share memory

- Share file descriptors

- Share heap

- Have separate stacks

📌 This is why threads need locks.
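The sharing rules above can be sketched with the standard `threading` module. The names `shared_results` and `worker` are invented for the example: the list lives on the heap and is visible to every thread, while each thread's local variable sits on its own stack.

```python
import threading

shared_results = []             # one list on the heap, visible to every thread
results_lock = threading.Lock()


def worker(n: int) -> None:
    # `local_total` is a local variable: each thread has its own copy on its own stack.
    local_total = sum(range(n))
    # The list is shared, so the append is guarded by a lock.
    with results_lock:
        shared_results.append((n, local_total))


threads = [threading.Thread(target=worker, args=(n,)) for n in range(1, 5)]
for t in threads:
    t.start()
for t in threads:
    t.join()

# The arrival order depends on scheduling, so sort before printing.
print(sorted(shared_results))
```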

4. Concurrency (system-level)

Concurrency means multiple execution paths overlap in time.

This does not require multiple CPUs.

On a single CPU:

- OS context-switches between threads

- Each makes progress

- Interleaving occurs

On multiple CPUs:

- Threads literally run in parallel

Important distinction

| Term | Meaning |
|---|---|
| Concurrency | Overlap in time |
| Parallelism | Simultaneous execution |

Concurrency ⇒ correctness problem

Parallelism ⇒ performance opportunity
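Concurrency without parallelism can be shown on a single thread. In this sketch (task names and step counts are arbitrary), two `asyncio` tasks take turns on one event loop: their work overlaps in time even though only one of them runs at any instant.

```python
import asyncio

log = []


async def task(name: str, steps: int) -> None:
    for i in range(steps):
        log.append(f"{name}{i}")
        await asyncio.sleep(0)   # yield control so the other task can run


async def main() -> None:
    # Both tasks are in progress at once, but they share a single thread.
    await asyncio.gather(task("A", 3), task("B", 3))


asyncio.run(main())
print(log)
```

The log shows entries from A and B mixed together rather than all of A followed by all of B, which is interleaving, not simultaneous execution.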

5. Why concurrency is hard

Because of:

- Shared memory

- Shared files

- Shared I/O

- Non-deterministic scheduling

Classic problem:

read → modify → write

interleaved between threads → corruption.
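The lost update can be reproduced deterministically. The sketch below is a contrived setup, not production code: a `threading.Barrier` forces both threads to finish their read before either writes, which is exactly the bad interleaving. The locked variant makes the whole read-modify-write one critical section.

```python
import threading


def _run_two(fn) -> None:
    """Run `fn` in two threads and wait for both to finish."""
    threads = [threading.Thread(target=fn) for _ in range(2)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()


def run_unlocked() -> int:
    state = {"counter": 0}
    both_have_read = threading.Barrier(2, timeout=5)

    def increment() -> None:
        current = state["counter"]        # read
        both_have_read.wait()             # force both reads before any write
        state["counter"] = current + 1    # modify + write (from a stale read)

    _run_two(increment)
    return state["counter"]


def run_locked() -> int:
    state = {"counter": 0}
    lock = threading.Lock()

    def increment() -> None:
        with lock:                        # read-modify-write as one unit
            state["counter"] += 1

    _run_two(increment)
    return state["counter"]


print(run_unlocked(), run_locked())
```

Two increments ran in both cases, but the unlocked counter ends at 1 because one update overwrote the other; the locked counter ends at 2.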

6. Concurrency at OS vs application

OS guarantees

- Filesystem integrity

- Process isolation

- Atomic syscalls (limited)

OS does NOT guarantee

- Logical correctness

- Application invariants

- Ordering across syscalls

📌 That’s the programmer’s job.
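A small example of the difference between one atomic syscall and a sequence of syscalls: checking whether a file exists and then creating it takes two calls, and another process can slip in between them. Opening with `O_CREAT | O_EXCL` does the check and the creation in a single call. The helper name and the file name below are hypothetical.

```python
import os
import tempfile


def create_exclusive(path: str) -> bool:
    """Create `path` only if it does not exist yet; return True if we created it."""
    try:
        # O_CREAT | O_EXCL makes "check and create" a single atomic open() call.
        fd = os.open(path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    os.close(fd)
    return True


with tempfile.TemporaryDirectory() as tmp:
    marker_path = os.path.join(tmp, "writer.lock")   # hypothetical file name
    first = create_exclusive(marker_path)
    second = create_exclusive(marker_path)

print(first, second)
```

Only the first caller succeeds. Anything that needs more than one call, such as "read the file size, then write at that offset", gets no such guarantee and has to be protected by the application.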

7. Concurrency in web servers (general)

A web server:

- Accepts many connections

- Must handle them concurrently

- Cannot block on one request

Common models:

- Process-per-request

- Thread-per-request

- Event-loop (async)
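As a minimal sketch of the thread-based model, the standard library's `socketserver.ThreadingTCPServer` hands every accepted connection to a new thread (thread-per-connection, the socket-level counterpart of thread-per-request). The upper-casing "protocol", the handler name, and the use of an OS-assigned loopback port are assumptions made for the example.

```python
import socket
import socketserver
import threading


class UpperCaseHandler(socketserver.StreamRequestHandler):
    def handle(self) -> None:
        # Runs in its own thread for each accepted connection.
        line = self.rfile.readline()
        self.wfile.write(line.upper())


def start_server() -> socketserver.ThreadingTCPServer:
    # Port 0 asks the OS for any free port.
    server = socketserver.ThreadingTCPServer(("127.0.0.1", 0), UpperCaseHandler)
    server.daemon_threads = True
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def request(port: int, text: str) -> str:
    with socket.create_connection(("127.0.0.1", port), timeout=5) as conn:
        conn.sendall(text.encode() + b"\n")
        with conn.makefile("r") as reader:
            return reader.readline().strip()


server = start_server()
port = server.server_address[1]
replies = [request(port, word) for word in ("hello", "world")]
server.shutdown()
server.server_close()

print(replies)
```

Because each connection has its own thread, a slow client delays only its own handler, at the cost of every handler sharing the server's memory.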

8. Concurrency in FastAPI (important)

FastAPI handlers execute concurrently by default.

This surprises many people.

Why?

FastAPI runs on ASGI servers (e.g., Uvicorn).

ASGI is designed for concurrency.

8.1 async def endpoints

@app.get("/")
async def handler():
    ...

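- Runs in an event loop

- Multiple requests interleave at await points

- No automatic serialization

A handler that never awaits is not interrupted mid-body: it runs to completion and blocks the event loop while it does. Requests are still scheduled in no guaranteed order, so shared state still needs care.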
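The effect on shared state can be sketched without FastAPI at all. Below, plain coroutines stand in for async handlers and `asyncio.sleep(0)` stands in for awaiting real I/O; `run_handlers` and the counter are invented for the illustration. Every unlocked handler reads the counter before any of them writes it back, so updates are lost, while an `asyncio.Lock` serializes the read-modify-write.

```python
import asyncio


async def run_handlers(use_lock: bool, requests: int = 10) -> int:
    state = {"counter": 0}
    lock = asyncio.Lock()

    async def handler() -> None:
        current = state["counter"]        # read
        await asyncio.sleep(0)            # stand-in for awaiting I/O; others run here
        state["counter"] = current + 1    # write, possibly from a stale read

    async def locked_handler() -> None:
        async with lock:                  # only one handler in the critical section
            await handler()

    target = locked_handler if use_lock else handler
    await asyncio.gather(*(target() for _ in range(requests)))
    return state["counter"]


unsafe_total = asyncio.run(run_handlers(use_lock=False))
safe_total = asyncio.run(run_handlers(use_lock=True))
print(unsafe_total, safe_total)
```

Ten handlers ran in both cases, but only the locked run counts all ten.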

8.2 def endpoints

@app.get("/")
def handler():
    ...

- Executed in a thread pool

- Multiple threads are in flight at once; a switch can happen between any two bytecode instructions, even under CPython's GIL

- Share memory

📌 This is real multi-threading.
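A rough stand-in for this behaviour, using `concurrent.futures.ThreadPoolExecutor` in place of the server's own thread pool: the barrier only opens when two calls are inside the handler at the same moment, so the example completes only if the handlers really overlap on different threads. The `handler` function and pool size are assumptions for the sketch.

```python
import threading
from concurrent.futures import ThreadPoolExecutor

# Two "requests" must be inside the handler at the same time to pass the barrier.
both_in_flight = threading.Barrier(2, timeout=5)


def handler(request_id: int) -> str:
    both_in_flight.wait()
    # Report which pool thread served this request.
    return threading.current_thread().name


with ThreadPoolExecutor(max_workers=2) as pool:
    thread_names = list(pool.map(handler, [1, 2]))

print(thread_names)
```

Two different thread names come back, which means any module-level dictionary or open file touched by such a handler is shared between threads.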

8.3 Multiple workers

uvicorn app:app --workers 4

- 4 OS processes

- No shared memory

- All may write the same files

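This is the most dangerous mode for storage engines: each worker keeps its own in-memory state, and a lock held inside one process does nothing to stop writes from another.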
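When several worker processes append to one file, the coordination has to live outside any single process. One common option on POSIX systems is an advisory file lock. The sketch below assumes a POSIX platform (the `fcntl` module is not available on Windows) and assumes every writer goes through the same hypothetical `locked_append` helper, since advisory locks only bind processes that ask for them.

```python
import fcntl   # POSIX only
import os
import tempfile


def locked_append(path: str, record: bytes) -> int:
    """Append `record` under an exclusive advisory lock; return its start offset."""
    with open(path, "ab") as f:
        fcntl.flock(f, fcntl.LOCK_EX)          # blocks while another process holds the lock
        try:
            offset = f.seek(0, os.SEEK_END)    # file size, read while holding the lock
            f.write(record)
            f.flush()                          # push the bytes out before unlocking
            return offset
        finally:
            fcntl.flock(f, fcntl.LOCK_UN)


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "database.bin")
    offsets = [locked_append(path, b"alpha"), locked_append(path, b"beta")]

print(offsets)
```

Each record gets a distinct offset because the size check and the write happen under the same lock. An in-memory index would still be private to each worker and would need its own strategy.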

9. Concurrency in FastAPI (summary table)

| Level | Concurrency |
|---|---|
| Requests | Concurrent |
| Handlers | Concurrent |
| Threads | Yes (sync endpoints) |
| Event loop | Yes (async endpoints) |
| Processes | Optional (--workers) |

10. Why this matters for your storage engine

Your FastAPI app:

- Has shared memory (KEY_OFFSET_MAP)

- Has shared file (database.bin)

- Has concurrent handlers

Without synchronization:

- Duplicate offsets

- Torn reads

- Lost updates

- Corruption

FastAPI assumes stateless handlers and does not synchronize shared state for you.

Storage engines are stateful.

That mismatch is the core issue.
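One possible shape for the fix inside a single process is to guard the index and the file with the same lock, so that "find the end of the file, append, record the offset" is one critical section. The `TinyStore` class below is a hypothetical sketch, not the actual engine; only the names `KEY_OFFSET_MAP` and `database.bin` come from these notes.

```python
import os
import tempfile
import threading


class TinyStore:
    """Append-only store: one lock protects both the file and the in-memory index."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.key_offset_map = {}          # key -> (offset, length), like KEY_OFFSET_MAP
        self._lock = threading.Lock()
        open(path, "ab").close()          # make sure the file exists

    def put(self, key: str, value: bytes) -> None:
        with self._lock:
            with open(self.path, "ab") as f:
                offset = f.seek(0, os.SEEK_END)
                f.write(value)
            # Index is updated only after the bytes are written and the file is closed.
            self.key_offset_map[key] = (offset, len(value))

    def get(self, key: str) -> bytes:
        with self._lock:
            offset, length = self.key_offset_map[key]
            with open(self.path, "rb") as f:
                f.seek(offset)
                return f.read(length)


with tempfile.TemporaryDirectory() as tmp:
    store = TinyStore(os.path.join(tmp, "database.bin"))
    writers = [
        threading.Thread(target=store.put, args=(f"k{i}", f"value-{i}".encode()))
        for i in range(8)
    ]
    for t in writers:
        t.start()
    for t in writers:
        t.join()
    values = {key: store.get(key) for key in store.key_offset_map}
    offsets = [offset for offset, _ in store.key_offset_map.values()]

print(len(values), len(set(offsets)))
```

A single coarse lock is the simplest choice and trades throughput for correctness. It covers threads in one process only; with multiple workers a cross-process mechanism is still needed.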

11. One-line mental model

Programs are passive. Processes own resources. Threads interleave execution. FastAPI makes concurrency the default.

Context managers

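from contextlib import contextmanager

@contextmanager
def visit(dest):
    # Setup: runs when the with-block is entered.
    print("ticket check")
    print(f"fly to {dest}")
    try:
        yield  # the body of the with-block runs here
    finally:
        # Teardown: runs even if the body raises.
        print("fly back")

with visit("paris"):
    print("yay Eiffel Tower!")

Output:

ticket check
fly to paris
yay Eiffel Tower!
fly back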
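The same try/finally shape is what makes locks convenient to use as context managers. In this small sketch (the function name and the error are invented), the critical section raises, yet the lock is released on the way out of the `with` block.

```python
import threading

lock = threading.Lock()


def risky_update() -> None:
    with lock:                       # acquire on entry, release on exit
        raise ValueError("boom")     # the lock is still released


try:
    risky_update()
except ValueError:
    pass

print(lock.locked())
```

Without the context manager, an exception between a manual `acquire()` and `release()` would leave the lock held and block every later caller.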

References

- ChatGPT: Give me a brief idea about program, process, threads, concurrency at system level, concurrency at play in fastapi

- Python concurrency - Real Python blog

- Python Threading - Real Python blog

- Python Thread Safety - Real Python blog

- Python with statement - Real Python blog

- The Little Book of Semaphores - book